Blueberry vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Blueberry

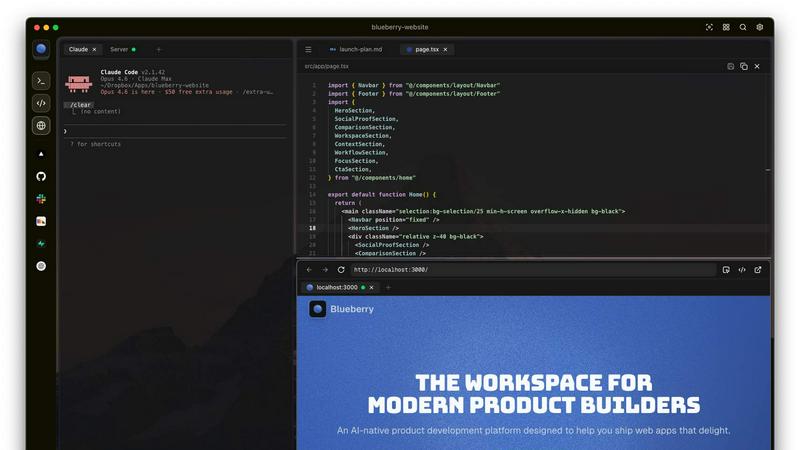

Blueberry is a unified Mac app that integrates your editor, terminal, and browser for streamlined product development.

Last updated: February 26, 2026

OpenMark AI

OpenMark AI benchmarks 100+ LLMs on your task, measuring cost, speed, quality, and stability. It is browser-based, and hosted runs need no provider API keys.

Overview

About Blueberry

Blueberry is a macOS application for product builders that combines an editor, a terminal, and a browser in a single focused workspace, so developers no longer juggle multiple tools and windows. It is positioned as an AI-native product development platform for people shipping web applications. Blueberry connects AI models such as Claude, Gemini, and Codex through its MCP (Model Context Protocol) integration, which gives the models context on your entire project in real time and removes the need to copy and paste context by hand. Blueberry is currently free during its beta phase.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
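To make the point about variance concrete, here is a minimal sketch of why repeat runs matter. It does not use OpenMark AI's API or scoring method; the model names, the 0 to 1 quality scale, and every number are hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical quality scores (0 to 1) from five repeat runs of the same task.
# Model names and numbers are invented for illustration only.
runs = {
    "model-a": [0.90, 0.88, 0.91, 0.89, 0.90],
    "model-b": [0.97, 0.62, 0.95, 0.70, 0.96],
}

def summarize(scores):
    """Return (mean, population standard deviation) for a list of scores."""
    return mean(scores), pstdev(scores)

summary = {name: summarize(scores) for name, scores in runs.items()}

for name, (avg, spread) in summary.items():
    best = max(runs[name])
    print(f"{name}: best={best:.2f} mean={avg:.3f} spread={spread:.3f}")
```

In this made-up data, model-b produces the single best output, but model-a has the higher average and a much smaller spread. Judging from one run would hide that difference.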

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
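One way to read "quality relative to what you pay" is as a simple ratio of quality to cost per request. The sketch below assumes that definition, which is not confirmed as OpenMark AI's own formula; the `Result` type, model names, and figures are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Result:
    model: str
    quality: float           # scored quality on a hypothetical 0 to 1 scale
    cost_per_request: float  # US dollars, invented figures

def efficiency(r: Result) -> float:
    """Quality points per dollar; one possible definition of cost efficiency."""
    return r.quality / r.cost_per_request

results = [
    Result("cheap-model", quality=0.40, cost_per_request=0.001),
    Result("mid-model", quality=0.85, cost_per_request=0.002),
    Result("premium-model", quality=0.92, cost_per_request=0.010),
]

cheapest = min(results, key=lambda r: r.cost_per_request)
best_value = max(results, key=efficiency)

print(f"cheapest: {cheapest.model}")
print(f"best value: {best_value.model}")
```

With these invented numbers the cheapest model is not the best value, because its lower quality more than offsets its lower price. A plain ratio tends to favor inexpensive models, so in practice you may also want a minimum quality threshold before ranking.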

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.