OpenMark AI vs qtrl.ai

Side-by-side comparison to help you choose the right product.

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

qtrl.ai

qtrl.ai helps QA teams scale testing with AI-driven agents while maintaining complete control and governance.

Last updated: March 4, 2026


Overview

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs. Because each task is repeated, you see the variance in results rather than a single lucky output.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

Results are shown side by side and come from real API calls to the models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the lowest per-token price on a datasheet.

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.

About qtrl.ai

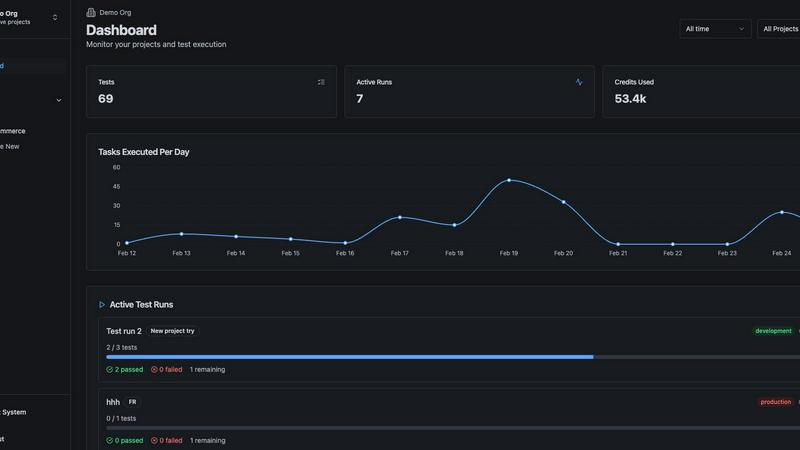

qtrl.ai is a quality assurance platform that combines test management with AI-driven test automation. Designed for software teams, it lets organizations scale their QA processes without giving up control or governance. A central hub organizes test cases, plans test runs, and tracks quality metrics through real-time dashboards, so engineering leads and QA managers keep clear visibility into testing progress, outcomes, and risks.

What distinguishes qtrl.ai is an AI layer that teams can adopt gradually. Instead of requiring an opaque, AI-first approach from day one, qtrl.ai lets teams start with manual test management and move toward automation at their own pace. Its autonomous agents can generate UI tests from plain-English descriptions, update them as the application changes, and run them at scale across multiple environments.

qtrl.ai aims to close the gap between slow manual testing and brittle traditional automation. It targets product-led engineering teams, QA groups moving away from manual testing, organizations modernizing legacy workflows, and enterprises that require strict compliance and audit trails.