Agenta vs Fallom

Side-by-side comparison to help you choose the right product.

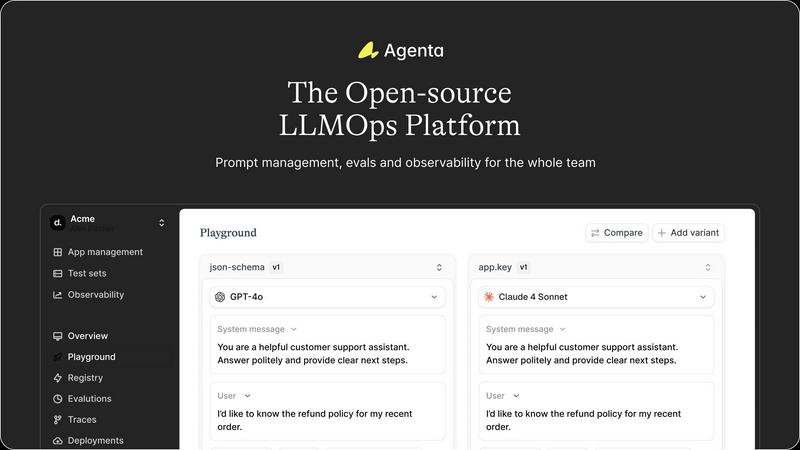

Agenta is an open-source LLMOps platform that brings teams together to build reliable AI applications.

Last updated: March 1, 2026

Fallom delivers comprehensive observability for LLM applications, with real-time insights and cost transparency.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Management

Agenta consolidates the core elements of LLM development, including prompts, evaluations, and traces, into one platform. Centralizing them replaces a patchwork of separate tools with a single place to manage the entire LLM lifecycle.

Unified Playground

Agenta's unified playground lets teams compare prompts and models side by side, so they can see how each variant responds before choosing one. Working this way shortens iteration cycles and supports continuous improvement.

Automated Evaluation

Agenta supports a systematic process for running experiments and tracking results through automated evaluation. Teams can combine evaluators, including LLM-as-a-judge and custom code evaluators, to validate each change against measured results.
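
In general, a custom code evaluator is a function that scores an application's output. The minimal Python sketch below assumes a simplified, hypothetical signature, `evaluate(inputs, output, correct_answer) -> float`; Agenta's actual evaluator interface may differ, so check its documentation before adapting this.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences don't count as errors."""
    return re.sub(r"\s+", " ", text.strip().lower())


def evaluate(inputs: dict, output: str, correct_answer: str) -> float:
    """Hypothetical custom code evaluator returning a score in [0.0, 1.0].

    `inputs` is accepted to mirror a typical evaluator signature but is unused here.
    An exact match after normalization scores 1.0; otherwise the score is the
    fraction of reference words that also appear in the output.
    """
    got, want = _normalize(output), _normalize(correct_answer)
    if got == want:
        return 1.0
    want_words = set(want.split())
    if not want_words:
        return 0.0
    return len(want_words & set(got.split())) / len(want_words)
```

For example, `evaluate({"question": "Capital of France?"}, " Paris ", "paris")` returns 1.0, while `evaluate({}, "The capital is Paris", "Paris France")` returns 0.5 because only one of the two reference words appears in the output.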

Observability and Debugging

Agenta's observability features let teams trace every request and locate the exact point of failure in their AI systems. Teams can annotate traces together and turn any trace into a test case with a single click.
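
Locating a failure point usually means walking a trace's tree of spans to find the step that actually raised the error, rather than the parent steps that merely reported it. The sketch below uses a hypothetical, simplified `Span` structure; it is not Agenta's trace format.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """Hypothetical, simplified span: one step of a traced request."""
    name: str
    error: Optional[str] = None
    children: list[Span] = field(default_factory=list)


def find_failure_point(span: Span) -> Optional[Span]:
    """Return the deepest failing span on the first failing path, or None.

    Children are checked before the span itself, so an error that a parent
    reports only because a child failed is attributed to that child.
    """
    for child in span.children:
        culprit = find_failure_point(child)
        if culprit is not None:
            return culprit
    return span if span.error else None
```

Searching children first is the design choice that matters here: a request-level error like "upstream failed" points back to the tool call that timed out.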

Fallom

Real-Time Observability

Fallom provides real-time observability for AI agents: users can track tool calls, analyze execution timing, and debug interactions. Every LLM call is recorded, so performance problems and potential issues surface as they happen.

Comprehensive Cost Attribution

Fallom tracks spending across models, users, and teams, giving organizations full cost transparency. This simplifies budgeting and chargeback, helping teams allocate resources and reduce operational spend.
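
Per-call cost attribution generally comes down to simple arithmetic: multiply input and output token counts by the model's per-token prices, then sum the results by whatever dimension you charge back on. This sketch assumes hypothetical model names and prices (`model-a`, `model-b`, in USD per million tokens); it is not Fallom's pricing table or API.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical prices in USD per 1M tokens as (input, output).
# Real prices vary by provider and change over time.
PRICES = {
    "model-a": (Decimal("2.50"), Decimal("10.00")),
    "model-b": (Decimal("0.15"), Decimal("0.60")),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Cost of one LLM call under the assumed price table."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / Decimal(1_000_000)


def cost_by(calls: list[dict], key: str) -> dict[str, Decimal]:
    """Sum per-call costs grouped by a field such as "team", "user", or "model"."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    for call in calls:
        totals[call[key]] += call_cost(call["model"], call["input_tokens"], call["output_tokens"])
    return dict(totals)
```

Under these assumed prices, a `model-a` call with 1,000 input and 500 output tokens costs (1,000 × 2.50 + 500 × 10.00) / 1,000,000 = $0.0075. `Decimal` keeps many small per-call costs from accumulating floating-point error.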

Enterprise-Grade Audit Trails

Fallom is designed with compliance at its core, offering complete audit trails that support regulatory requirements such as the EU AI Act, SOC 2, and GDPR. The audit trails include input/output logging, model versioning, and user consent tracking, so organizations have the records they need when an audit comes.

Session Tracking and Contextual Grouping

Fallom groups traces by session, user, or customer, so each interaction can be read in context. This makes it easier to analyze behavior patterns and performance metrics across a whole conversation or account.
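
Grouping by session, user, or customer amounts to bucketing traces on one attribute and ordering each bucket in time. The `Trace` fields below are hypothetical and only illustrate the idea; they are not Fallom's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trace:
    """Hypothetical trace record with the attributes used for grouping."""
    trace_id: str
    session_id: str
    user_id: str
    started_at: float  # seconds since the epoch
    latency_ms: float


def group_traces(traces: list[Trace], key: str = "session_id") -> dict[str, list[Trace]]:
    """Bucket traces by the given attribute and sort each bucket chronologically."""
    groups: dict[str, list[Trace]] = defaultdict(list)
    for t in traces:
        groups[getattr(t, key)].append(t)
    return {k: sorted(ts, key=lambda t: t.started_at) for k, ts in groups.items()}
```

Passing `key="user_id"` instead of the default regroups the same traces per user, which mirrors the session, user, and customer views described above.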

Use Cases

Agenta

Collaborative Prompt Development

Agenta suits teams that develop prompts collaboratively. On a shared platform, teams can experiment with, compare, and version prompts together, which improves the overall quality of their LLM applications.

Systematic Evaluation Processes

Organizations can use Agenta to run systematic evaluation processes for their AI models. By tracking results and validating every change, teams can confirm that their models keep improving and continue to meet performance benchmarks.

Debugging and Trace Management

When issues arise, Agenta provides the tools needed to debug them. Teams can trace requests, identify failure points, and gather user feedback, which lets them respond to problems quickly and keep improving.

Integration with Existing Workflows

Agenta integrates with existing tools and frameworks such as LangChain and OpenAI, so organizations can add LLMOps capabilities without disrupting their current workflows.

Fallom

Monitoring AI Operations

Organizations can use Fallom to monitor their AI operations in real time, gaining visibility into each interaction involving LLMs and AI agents. This proactive monitoring helps teams catch issues before they escalate and keep operations running smoothly.

Compliance and Regulatory Support

Companies operating in regulated industries can leverage Fallom’s comprehensive audit trails and privacy controls to meet stringent compliance requirements. This use case is particularly valuable for organizations needing to adhere to regulations such as GDPR and the EU AI Act.

Cost Management and Optimization

Fallom's cost attribution feature allows businesses to closely track their spending on various AI models and tools, facilitating better budget management. Organizations can analyze usage patterns and make informed decisions about resource allocation and cost optimization.

Debugging Complex Workflows

With its intuitive timing waterfalls and session tracking capabilities, Fallom is ideal for debugging complex workflows involving multiple steps and interactions. It enables teams to pinpoint latency issues and optimize their AI agents for enhanced performance.
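
A timing waterfall draws each step of a multi-step agent as a bar offset from the start of the trace, which makes the slow step obvious at a glance. This is a minimal text-only sketch with a hypothetical `Step` type; Fallom's waterfall is a UI view, not this code.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """Hypothetical step of an agent run, with times in milliseconds."""
    name: str
    start_ms: float
    end_ms: float


def waterfall(steps: list[Step], width: int = 40) -> str:
    """Render one text bar per step, offset and scaled relative to the whole trace."""
    t0 = min(s.start_ms for s in steps)
    total = max(s.end_ms for s in steps) - t0
    scale = width / total if total else 0.0
    lines = []
    for s in sorted(steps, key=lambda s: s.start_ms):
        offset = round((s.start_ms - t0) * scale)
        length = max(1, round((s.end_ms - s.start_ms) * scale))  # keep tiny steps visible
        lines.append(f"{s.name:<12}{' ' * offset}{'#' * length} {s.end_ms - s.start_ms:.0f} ms")
    return "\n".join(lines)


def slowest(steps: list[Step]) -> Step:
    """Return the step with the longest duration, the first latency suspect."""
    return max(steps, key=lambda s: s.end_ms - s.start_ms)
```

With steps `plan` (0 to 100 ms), `tool:search` (100 to 900 ms), and `llm_call` (900 to 1,000 ms), the `tool:search` bar fills most of the width and `slowest()` returns it, pointing at the tool call as the latency bottleneck.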

Overview

About Agenta

Agenta is an open-source LLMOps platform built for AI teams that develop and deploy reliable, production-grade LLM applications. Large language model (LLM) development often becomes disorganized: prompts are scattered, teams work in silos, and changes ship without validation. Agenta centralizes the entire LLM development lifecycle and brings developers, product managers, and domain experts into the same workflow. Acting as a single source of truth, it lets teams experiment with prompts and models, run systematic evaluations, and debug issues, turning fragmented workflows into structured, evidence-driven processes. The aim is to replace guesswork with governance, so that organizations ship reliable AI products that meet market demands and their own quality standards.

About Fallom

Fallom is an observability platform built for AI-powered applications. It gives engineering and product teams real-time visibility into every interaction involving Large Language Models (LLMs) and AI agents in their production environments. AI operations are often opaque and expensive, and Fallom addresses this by recording the full lifecycle of each API call, including prompts, outputs, tool executions, token usage, latency, and exact per-call costs. Beyond raw traces, it provides session-level context, timing waterfalls for complex multi-step agents, and enterprise-ready audit trails that support regulatory compliance. Its OpenTelemetry-native SDK lets teams instrument their applications with little effort, then monitor live usage, debug difficult issues, and attribute operational costs across models, users, and business units. The result is that AI stops being a black box and becomes a transparent, manageable asset.

Frequently Asked Questions

Agenta FAQ

What types of teams can benefit from Agenta?

Agenta is designed for a diverse range of teams, including developers, product managers, data scientists, and domain experts. Its collaborative features unify these roles, enhancing communication and workflow efficiency.

How does Agenta enhance collaboration among team members?

Agenta provides a centralized platform where team members can share prompts, conduct evaluations, and debug issues together. This collaborative environment fosters transparency and encourages collective problem-solving.

Is Agenta suitable for both small and large organizations?

Absolutely. Agenta's scalable architecture makes it suitable for organizations of all sizes, from startups to large enterprises, enabling them to adopt best practices in LLMOps regardless of their scale.

Can I integrate Agenta with my existing LLM frameworks?

Yes, Agenta is designed to integrate seamlessly with various LLM frameworks and tools, allowing teams to build upon their existing infrastructure without facing vendor lock-in or unnecessary complications.

Fallom FAQ

What is Fallom and how does it work?

Fallom is an observability platform designed for intelligent applications that provides real-time visibility into LLM and AI agent interactions. It captures detailed data about API calls, enabling organizations to monitor performance, track costs, and ensure compliance.

How quickly can I set up Fallom?

Fallom's OpenTelemetry-native SDK is designed for quick integration: according to Fallom, teams can start tracing their applications in under five minutes, so monitoring of live usage can begin almost immediately.

Is Fallom compliant with regulatory standards?

Yes, Fallom is built to meet stringent regulatory requirements, offering comprehensive audit trails, model versioning, and user consent tracking. It supports compliance with various regulations, including GDPR and the EU AI Act.

Can I monitor multiple AI models and tools with Fallom?

Yes. Fallom monitors AI models and tools through a single SDK, providing a unified view of operations across different platforms without vendor lock-in. This makes it easier for organizations to change or add models as their AI deployments evolve.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform for AI teams building reliable, production-grade LLM applications, offering a structured environment where developers, product managers, and domain experts collaborate. Users look for alternatives for reasons such as pricing, feature sets that do not match a project's needs, or the need to integrate with platforms they already use. When comparing options, evaluate how well each one supports collaborative experimentation, automated evaluation, and everyday usability. Also check whether it can centralize the LLM development lifecycle and carry applications from development to deployment without compromising their reliability.

Fallom Alternatives

Fallom is an enterprise-grade observability platform for Large Language Model (LLM) applications and AI agents, giving engineering and product teams a view of the entire lifecycle of each LLM call in production. Users look for alternatives for reasons such as pricing, feature sets, or platform requirements that do not fit their organization. When comparing options, consider the depth of observability, compliance capabilities, ease of integration, and overall user experience. Prioritize solutions with robust tracing, practical debugging features, and the ability to scale with growing AI operations, so the chosen platform meets both immediate and long-term operational needs.