Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right product.

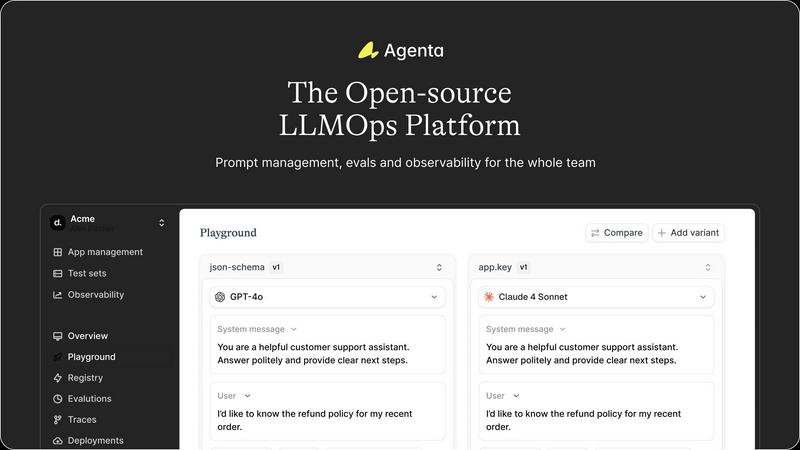

Agenta is an open-source LLMOps platform that unifies teams to build reliable AI applications.

Last updated: March 1, 2026

OpenMark AI instantly benchmarks over one hundred LLMs on your specific task for cost, speed, and quality without requiring API keys.

Last updated: March 26, 2026

Feature Comparison

Agenta

Centralized Management

Agenta consolidates all elements of LLM development, including prompts, evaluations, and traces, into one cohesive platform. This centralization eliminates the chaos of disparate tools and provides a streamlined approach to managing the entire LLM lifecycle.

Unified Playground

With Agenta's unified playground, teams can compare different prompts and models side by side and base their decisions on the results. This feature supports quick iteration and continuous improvement.

Automated Evaluation

Agenta facilitates a systematic process for running experiments and tracking results through automated evaluations. By integrating various evaluators—including LLM-as-a-judge and custom code evaluators—teams can validate changes with confidence and precision.
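To make the idea of a custom code evaluator concrete, here is a minimal Python sketch. It does not use Agenta's SDK. The names `exact_match_evaluator` and `pass_rate`, and the layout of the test cases, are hypothetical and chosen only for illustration.

```python
from typing import Callable

# Hypothetical evaluator signature: (model_output, expected) -> score in [0, 1].
Evaluator = Callable[[str, str], float]


def exact_match_evaluator(output: str, expected: str) -> float:
    """Return 1.0 when the normalized output equals the expected answer."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def pass_rate(cases: list[tuple[str, str]], evaluator: Evaluator) -> float:
    """Average the evaluator score over (output, expected) pairs."""
    if not cases:
        raise ValueError("at least one test case is required")
    return sum(evaluator(out, exp) for out, exp in cases) / len(cases)


# Illustrative data only, not real evaluation results.
cases = [("Paris", "paris"), (" Berlin ", "Berlin"), ("Rome", "Madrid")]
score = pass_rate(cases, exact_match_evaluator)
```

An LLM-as-a-judge evaluator could plug into the same `Evaluator` shape by replacing the string comparison with a call to a judging model.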

Observability and Debugging

With robust observability features, Agenta enables teams to trace every request and identify exact failure points within their AI systems. This functionality enhances debugging capabilities, allowing teams to annotate traces collaboratively and turn any trace into a test with a single click.

OpenMark AI

Plain Language Task Configuration

Describe the exact task you need an AI model to perform—be it classification, data extraction, creative writing, or complex reasoning—using simple, natural language instructions. OpenMark's intelligent system interprets your intent and constructs the appropriate benchmarking prompts, removing the need for manual, error-prone prompt engineering. This allows you to focus on defining the problem domain rather than the technical intricacies of interfacing with each model's unique API and expected input format.

Multi-Model, Real-API Benchmarking

Execute your defined task against a meticulously curated catalog of over 100 leading models from providers like OpenAI, Anthropic, Google, and open-source communities in one coordinated session. Crucially, every test makes a live API call, ensuring you compare real latency, real costs, and real, current model performance—not cached or idealized marketing numbers. This delivers an authentic, apples-to-apples comparison under identical conditions.

Comprehensive Performance Analytics

Gain insights beyond simple pass/fail metrics with a detailed analytics dashboard. View side-by-side comparisons of each model's quality score (as defined by your task), the actual cost per request, response latency, and token usage. This holistic view enables you to evaluate the true cost-efficiency of a model: the quality it delivers relative to the price you pay for each API call.
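The cost-efficiency idea above can be sketched as a simple ratio of quality to price. This is an illustration only, not OpenMark's actual scoring: the `BenchmarkRow` fields, the `quality_per_dollar` helper, and the sample numbers are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkRow:
    model: str                # placeholder model name
    quality: float            # task-defined quality score, assumed in [0, 1]
    cost_per_request: float   # USD per request, assumed > 0
    latency_s: float          # response latency in seconds


def quality_per_dollar(row: BenchmarkRow) -> float:
    """Quality delivered per dollar spent on one request."""
    if row.cost_per_request <= 0:
        raise ValueError("cost_per_request must be positive")
    return row.quality / row.cost_per_request


# Made-up figures for illustration.
rows = [
    BenchmarkRow("model-a", 0.90, 0.010, 1.8),
    BenchmarkRow("model-b", 0.80, 0.002, 0.9),
]
ranked = sorted(rows, key=quality_per_dollar, reverse=True)
```

In this made-up data the cheaper model ranks first despite its lower quality score, which is the kind of trade-off a side-by-side view is meant to surface.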

Variance and Stability Testing

Understand not just if a model can complete a task, but if it will do so reliably every time. OpenMark runs your prompts multiple times for each model, analyzing the variance in outputs. This reveals consistency—or a lack thereof—highlighting models that may produce a stellar result once but fail unpredictably, a critical factor for production systems where stability is non-negotiable.
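One way to quantify the consistency described above is to summarize the spread of scores across repeated runs. The sketch below assumes each run yields a numeric quality score. The `stability_summary` helper and the sample scores are hypothetical, and OpenMark's own variance analysis may differ.

```python
from statistics import mean, pstdev


def stability_summary(scores: list[float]) -> dict[str, float]:
    """Summarize repeated-run scores: average, spread, and worst case."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to measure variance")
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),  # population standard deviation across runs
        "worst": min(scores),
    }


# Made-up scores from four runs of the same prompt per model.
runs = {
    "model-a": [0.90, 0.91, 0.89, 0.90],
    "model-b": [1.00, 0.40, 0.95, 0.50],
}
summaries = {name: stability_summary(s) for name, s in runs.items()}
```

Here `model-b` reaches the highest single score but has a far larger standard deviation and a much lower worst case, which is the pattern stability testing is designed to expose.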

Use Cases

Agenta

Collaborative Prompt Development

Agenta is ideal for teams seeking to enhance their collaborative efforts in prompt development. By providing a shared platform, teams can experiment, compare, and version prompts collectively, thereby improving the overall quality of their LLM applications.

Systematic Evaluation Processes

Organizations can leverage Agenta to implement systematic evaluation processes for their AI models. By tracking results and validating every change, teams can ensure that their models are continuously improving and meeting performance benchmarks.

Debugging and Trace Management

When issues arise, Agenta provides the tools necessary for effective debugging. Teams can trace requests, identify failure points, and gather user feedback, thus enabling a rapid response to problems and fostering a culture of continuous improvement.

Integration with Existing Workflows

Agenta seamlessly integrates with existing tools and frameworks, such as LangChain and OpenAI, making it an invaluable asset for organizations looking to enhance their LLMOps capabilities without disrupting their current workflows.

OpenMark AI

Pre-Deployment Model Validation

Before integrating an AI model into a live application or feature, product teams can use OpenMark to validate its performance on the exact tasks it will handle. This mitigates the risk of post-launch failures, unexpected costs, or poor user experience by providing empirical evidence that the chosen model meets the specific requirements for quality, speed, and budget.

Cost-Efficiency Optimization for Scaling Applications

For applications already using AI, OpenMark is instrumental in optimizing operational costs at scale. Developers can benchmark alternative, potentially more cost-effective models against their current solution to identify opportunities for reducing per-request expenses without sacrificing output quality, ensuring sustainable growth as user volume increases.
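As a rough illustration of this selection step, the sketch below picks the cheapest candidate whose quality stays at or above a chosen floor. The function name `cheapest_meeting_floor`, the model names, and all figures are assumptions made for the example, not output from OpenMark.

```python
from typing import Optional


def cheapest_meeting_floor(
    candidates: dict[str, tuple[float, float]],
    quality_floor: float,
) -> Optional[str]:
    """Return the lowest-cost model whose quality is at least quality_floor.

    candidates maps a model name to (quality, cost_per_request).
    Returns None when no model reaches the floor.
    """
    eligible = {
        name: cost
        for name, (quality, cost) in candidates.items()
        if quality >= quality_floor
    }
    if not eligible:
        return None
    return min(eligible, key=eligible.get)


# Made-up benchmark figures: (quality, USD per request).
candidates = {
    "current-model": (0.92, 0.0120),
    "alt-small": (0.85, 0.0015),
    "alt-medium": (0.91, 0.0040),
}
choice = cheapest_meeting_floor(candidates, quality_floor=0.90)
```

With a floor of 0.90 the sketch selects `alt-medium`, trading a small quality drop for a lower per-request cost. Raising the floor above every candidate's score returns `None`.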

Building Reliable RAG and Agentic Systems

When constructing Retrieval-Augmented Generation (RAG) pipelines or multi-agent workflows, the choice of LLM for routing, synthesis, or final answer generation is paramount. OpenMark allows architects to test candidate models on representative samples of their actual pipeline steps, so they can check that the selected models produce consistent, accurate, and contextually appropriate outputs that maintain the integrity of the entire system.

Comparative Research and Academic Study

Researchers and analysts can leverage OpenMark's structured environment to conduct controlled, reproducible studies on model capabilities across different providers and model families. The platform's ability to run identical prompts across many models and measure multiple dimensions of performance makes it an invaluable tool for generating unbiased, comparative insights into the evolving AI landscape.

Overview

About Agenta

Agenta is an open-source LLMOps platform for AI teams that develop and deploy production-grade LLM applications. LLM development often becomes disorganized: prompts are scattered across tools, teams work in silos, and changes ship without validation. Agenta addresses this by centralizing the LLM development lifecycle and giving developers, product managers, and domain experts a shared workspace. With a single source of truth, teams can experiment with prompts and models, run systematic evaluations, and debug issues by tracing individual requests. The goal is to replace guesswork with structured, evidence-driven processes so that teams ship reliable AI products.

About OpenMark AI

OpenMark AI is a platform for empirical, task-level benchmarking of large language models, designed for developers, product teams, and AI engineers. It replaces speculative model selection with side-by-side comparisons grounded in measured performance. You describe your specific task in plain language, and OpenMark executes it against a catalog of over 100 models in a single session. You receive metrics on scored quality, actual API cost per request, latency, and output stability across multiple runs. The stability metric reveals variance and consistency, so decisions rest on repeatable performance rather than a single fortunate output. Because OpenMark operates on a hosted credit system, you do not need to manage multiple API keys.

Frequently Asked Questions

Agenta FAQ

What types of teams can benefit from Agenta?

Agenta is designed for a diverse range of teams, including developers, product managers, data scientists, and domain experts. Its collaborative features unify these roles, enhancing communication and workflow efficiency.

How does Agenta enhance collaboration among team members?

Agenta provides a centralized platform where team members can share prompts, conduct evaluations, and debug issues together. This collaborative environment fosters transparency and encourages collective problem-solving.

Is Agenta suitable for both small and large organizations?

Absolutely. Agenta's scalable architecture makes it suitable for organizations of all sizes, from startups to large enterprises, enabling them to adopt best practices in LLMOps regardless of their scale.

Can I integrate Agenta with my existing LLM frameworks?

Yes, Agenta is designed to integrate seamlessly with various LLM frameworks and tools, allowing teams to build upon their existing infrastructure without facing vendor lock-in or unnecessary complications.

OpenMark AI FAQ

How does OpenMark AI calculate the quality score for a model's output?

OpenMark employs a sophisticated, task-aware evaluation system. For many standard tasks, it can use automated metrics or LLM-as-a-judge scoring against your defined success criteria. For highly custom evaluations, you can implement manual scoring or rubric-based checks within the platform. The score reflects how well the output meets the specific objectives of your described task, not a generic capability.

Do I need API keys for the models I want to test?

No, one of OpenMark's primary advantages is that it abstracts away API key management. You operate using OpenMark credits. The platform handles all the underlying API calls to the various model providers on your behalf. This simplifies setup dramatically and allows for instantaneous testing across competitors without configuring multiple accounts.

What is meant by testing "stability" or "variance"?

Stability testing refers to running the same prompt against the same model multiple times (in parallel or sequentially) and analyzing the differences in the outputs. A model with low variance produces very similar, high-quality results each time, which is crucial for production. High variance indicates unpredictability, where a model might give a perfect answer once but a poor or irrelevant one the next, representing a significant operational risk.

Can I benchmark private or fine-tuned models?

The current public catalog focuses on widely available, hosted foundation and proprietary models from major providers. For benchmarking private, fine-tuned, or self-hosted models, you would typically need to integrate them via a compatible API endpoint, which may be available through enterprise or custom plans. The platform's architecture is designed to accommodate a wide range of model sources.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform for AI teams focused on developing reliable, production-grade LLM applications. It offers a structured environment for collaboration among developers, product managers, and domain experts. Users often seek alternatives to Agenta because of pricing constraints, feature sets that do not match specific project needs, or the need to integrate with existing platforms. When considering alternatives, evaluate each platform's support for collaborative experimentation, automated evaluation, and overall usability. Also assess how well an alternative centralizes the LLM development lifecycle, so that the move from development to deployment preserves the reliability of your AI applications.

OpenMark AI Alternatives

OpenMark AI is a sophisticated, browser-based benchmarking platform within the developer tools category. It enables teams to empirically evaluate over one hundred large language models on their specific tasks, comparing critical metrics like cost, latency, quality, and output stability through real API calls. Users may explore alternatives for various reasons, such as differing budgetary constraints, a need for deeper integration into existing CI/CD pipelines, or a preference for self-hosted solutions that offer greater data control. The search often stems from a desire to find a tool that aligns precisely with their technical architecture and operational philosophy. When considering other solutions, discerning teams should prioritize platforms that deliver genuine, uncached performance data across a broad model catalog. The ideal tool should provide transparent insights into cost-for-quality trade-offs and consistency, moving beyond simplistic token pricing to inform truly strategic deployment decisions.